|

8/15/2023 0 Comments Amazon redshift spectrum vs athena

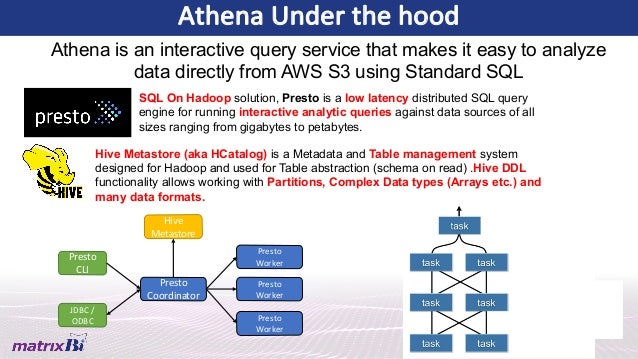

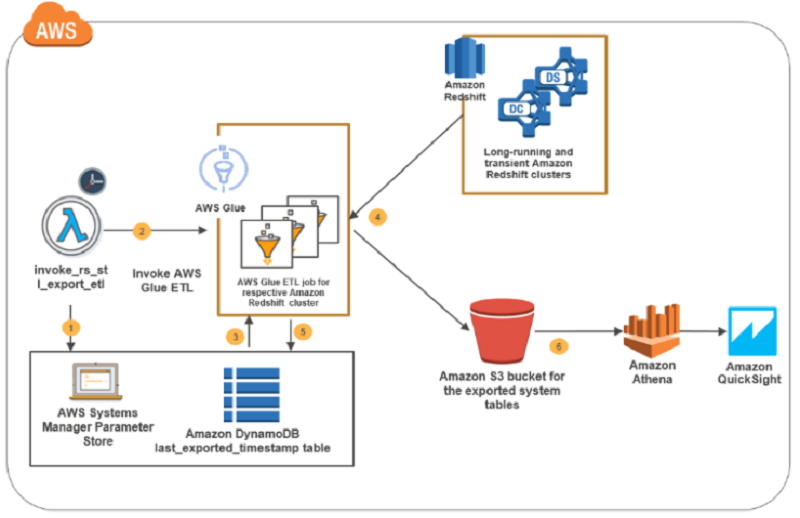

However, Athena may not be as performant as Redshift Spectrum for complex queries and large datasets. With Athena, users can create tables and views, perform complex joins, and use window functions to analyse data. Use cases for optimal performanceĪWS Athena is optimised for ad hoc queries and interactive analysis, and can return results within seconds or minutes. When you need a boost in power, e.g., when results are needed quickly or while running large, complicated queries, you can increase the Redshift cluster size, even though this will be expensive in the long run. This means that you can theoretically increase Redshift performance by adding resources to Redshift. The resources for Redshift Spectrum are assigned according to the size of the Redshift cluster. In essence, the only way to increase Athena performance is by optimising data (partitioning, compression, etc.). You may experience a drop in performance, especially during peak usage times due to the sharing of resources. It uses pooled resources as allocated by AWS, which means that performance can vary based on usage. Resource provisioningĪWS Athena provides no control over resource provisioning. Redshift Spectrum allows users to query data stored in both Amazon S3 and Redshift clusters using SQL, providing a unified view of all data. Redshift Spectrum, on the other hand, is a part of Amazon Redshift, a data warehouse service also offered by AWS, and can’t be used independently. It does not require any infrastructure setup or management and is designed to handle ad hoc queries and data exploration. Service infrastructureĪWS Athena is a serverless interactive query service that allows users to analyse data stored in Amazon S3 using standard SQL queries. Although both services offer similar capabilities, there are some key differences that set them apart. If you don't want to implement S3 copying yourself, Firehose provides an alternative for that.AWS Athena and AWS Redshift Spectrum are query services offered by Amazon Web Services (AWS) for processing and analysing large amounts of data in a cost-effective and efficient manner. This is also how Kinesis Firehose loads data into Redshift. It is multiple magnitudes faster than JDBC which I found only good for testing with small amounts of data. In my experience the best way to load data to Redshift is first to store it to S3 and then use COPY. The data partitioning plays a big role in terms of performance, but schema can be designed in many ways to suit your use-case. On the other hand, Redshift is a PostgreSQL based data warehouse which is much more complicated and flexible than Athena. If you mostly need to access the data just one way (e.g. The latter key schema would be the other way around. If you wanted to search only by type x but don't know the date, it would require a full bucket scan.

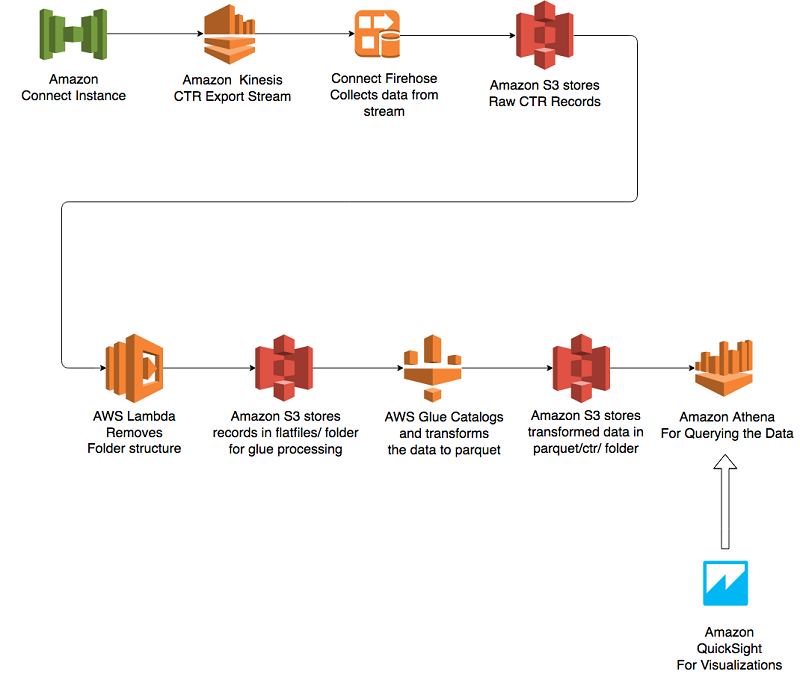

The former key structure allows you to limit the amount of data to be scanned by date or date and type but not type alone. Let's assume you have event type and time in events. Athena directly uses S3 key structure to limit the amount of data to be scanned. Which one to use depends on your data and access patterns. This is dependent on your use case (volumes of data and types of query that you need to run). You MAY NOT need to convert to parquet, if you use the right partitioning structure (s3 folders) and gzip the data then Athena/spectrum then performance can be good enough without the complexity of conversion to parquet. Map the parquet in to Athena and Redshift Spectrum.ĮVENTS -> STORE IT IN S3 -> HIVE to convert to parquet -> Use directly in AthenaĮVENTS -> STORE IT IN S3 -> HIVE to convert to parquet -> Use directly in Redshift using Redshift Spectrum AWS Glue Crawler can be a great way to create the metadata needed to.Redshift to bring the data in if you need to. View against your parquet tables, then if necessary a CTAS within If you want to use Redshift, then use Redshift spectrum to set up a.

Athena is great when used against parquet, so you don't need to use.You don't have to use spark to convert to parquet, there is also the option of using hive.Spark-Redshift works fine but is a complex solution.

0 Comments

Leave a Reply. |

AuthorWrite something about yourself. No need to be fancy, just an overview. ArchivesCategories |

RSS Feed

RSS Feed